2.4 Property and Commons

Nonmarket Production

Nonmarket production plays a bigger role in our economy than is often realized. Whether it is a parent looking after children all day or people voluntarily helping each other, a lot gets done without money ever changing hands. Nonmarket mechanisms have also always been much more important in the production of information than in the production of physical goods. There are no volunteer steel manufacturers, and we don't pick up a new car for free because someone felt like producing one. Nonetheless, we rely every day on a large volume of information that is produced on a voluntary basis. Non-governmental organizations and private foundations are dedicated to solving pressing issues that the market doesn't care about and that governments lack the resources to solve. In everyday life we get advice from colleagues about what film to watch or what road to take, and virtually all of our basic research is funded by governments or nonprofit institutions. With computers and the internet readily available to millions of people, the means of producing and distributing information are now widely held throughout the population. Nonmarket behaviour is thus becoming central to how our information and culture are produced.

As working hours are going down in the economically more developed countries, more spare time is available for voluntary activity. Through information and communication technology, these resources can be used more effectively as people have better access to existing information and have a medium through which they can express themselves, communicate and collaborate with others.

Money is usually thought to be the strongest motivation for work. However, we are motivated by a wide range of things. We look for social rewards such as acknowledgment or higher standing in our communities. We have intrinsic motivations such as pleasure or the personal satisfaction of having achieved something. Even small payments may undermine intrinsic motivations, as we might prefer to work for free for a good cause rather than do the same work for a monetary reward.[1]

Peer Production

Resources can be handled either as property or as a commons. Most physical objects, and also land, are usually considered property, while the road network, water or public services, for example, are shared within a community and are thus commons. When information is treated as property, it is made artificially scarce, contrary to its nonrival nature. Proprietary information introduces transaction costs which ultimately hinder overall information production, as shown in the previous chapter. Through the internet, a new model of production has evolved which relies heavily on the sharing of information as a commons. It is also radically decentralized: everyone can drop by, participate and contribute in the domains that interest them most, whereas in centralized production some boss somewhere decides what gets done by whom. This decentralized, collaborative production method in which the resources are organized as a commons is called commons-based peer production.[2]

To date, the most advanced example of large-scale peer production is the development of free and open source software (see 1.1 The GNU Project and Free Software and 1.2 GNU/Linux and Open Source). Hundreds of volunteers collaborate over the internet, using tools as diverse as email, mailing lists and chat. Above all, specialised revision control software helps to organize the code and keeps track of the many changes it undergoes. The result of these collaborative acts is thousands of software packages that compete with, and often outperform, the established software industry's products. As software is a finished product that consists solely of information, it is only natural that commons-based peer production works very well here.

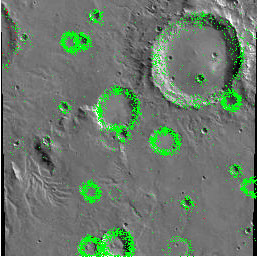

This production strategy has also been adopted outside the domain of software. Wikipedia, a multilingual encyclopedia which everybody can edit with nothing more than a web browser, started in 2001 and continues to grow at a huge pace, hosting over 7 million articles today. Although Wikipedia is often criticized as untrustworthy, vandalism is usually reverted very quickly,[3] and the fact that it is one of the top ten most-visited websites worldwide shows how valuable a resource it has become.[4] Other peer productions include the news site Slashdot, NASA Clickworkers (where volunteers map Martian craters on satellite imagery) and Project Gutenberg (where old texts long in the public domain are scanned and then proofread by many participants, the result being an ever-growing library of digitized books freely available over the internet as plain text).

In all these different peer productions, the whole project needs to be broken down into smaller parts that individuals can work on in the limited time they have. So, naturally, some projects work better as peer productions than others. The work on Wikipedia, with its many independent encyclopedic articles, is easier to distribute among many participants than, say, the writing of a book which needs a consistent style and structure. However, if the parameters are clearly stated from the start and everybody is willing to write one small chapter, a task like writing a book can also be accomplished. As the concept of peer production is still very young, new organizational methods are being discovered and technical tools built every day to help coordinate work and realize projects never thought possible before.

While some projects need to be centrally controlled and will never work very efficiently as peer productions, commons-based peer production does have some significant advantages. When the workers themselves choose what to do, they identify much more with their tasks and can spontaneously help out without having to ask anybody for permission or sign a new employment contract first. This can, however, easily lead to individuals misjudging their own abilities, so quality control by other participants is an important part of every peer production. What holds for the ever-increasing amount of information on the internet also holds for a single peer production: accrediting information and rating it for relevance and quality are as important as producing it in the first place. This work, too, can be peer produced. Many sites let users vote the comments or contributions of others up or down, and filters which initially hide low-rated comments are very useful. While the Google search engine ranks sites higher in its search results when they are often linked to from other sites,[5] Wikipedia relies primarily on social mechanisms and favours discussion as a means of reaching consensus.
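The vote-based filtering described above can be sketched in a few lines of Python. This is only an illustration: the `Comment` class, the `visible_comments` function, the sample data and the threshold value are all hypothetical and do not describe how any particular site implements its moderation.

```python
from dataclasses import dataclass


@dataclass
class Comment:
    author: str
    text: str
    upvotes: int = 0
    downvotes: int = 0

    @property
    def score(self) -> int:
        # Net rating given by the other participants.
        return self.upvotes - self.downvotes


def visible_comments(comments, threshold=0):
    """Return the comments scoring at or above the threshold, best first."""
    shown = [c for c in comments if c.score >= threshold]
    return sorted(shown, key=lambda c: c.score, reverse=True)


# Made-up sample data for illustration only.
comments = [
    Comment("alice", "Useful correction with a source.", upvotes=12, downvotes=1),
    Comment("bob", "First!", upvotes=0, downvotes=7),
    Comment("carol", "Related link.", upvotes=3, downvotes=2),
]

for c in visible_comments(comments, threshold=1):
    print(f"{c.score:+d} {c.author}: {c.text}")
```

Low-rated comments are hidden rather than deleted here, so a reader could still lower the threshold to see everything.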

Commons-based peer production is here to stay. Humans have always shared and collaborated with one another. Of course, not everything is always shared, but when widespread technology facilitates sharing, sharing will happen more often. Information and communication technologies have enabled projects in which lots of people work together although they are spread all around the globe. Peer productions don't draw labour away from the market; instead, they use resources left unused by the market and thus compete with market production in some domains. Firms can also profit from these mechanisms by working together with nonmarket forces. The border between producers on one side and consumers and users on the other is blurring. Increasingly, consumers come to produce what they want themselves, collaborating with like-minded people or companies. New business models and more 'interactive products' are needed in this networked information economy. Relics and business models of the industrial information economy need to be overcome.

Free Content

Commons-owned information that can be accessed and (re)used by everyone is more valuable to the economy and to society as a whole than proprietary information. Thus, commons-based peer productions with non-proprietary outputs are doubly welcome. However, there are also peer productions whose output is proprietary information that can't be reused for further information production, as its use is restricted by 'intellectual property' law. YouTube is an example of this: the videos hosted on this user-powered site can't be downloaded again, but are held on the central server where they are meant to reside, bringing ever more advertising revenue to the company. While there's no doubt that some very interesting and often entertaining content is to be found on YouTube, the works are trapped there and can't be creatively reused.

By contrast, non-proprietary information production enables a free culture (Chapter 2.2). Free content (free as in freedom) is any work with no significant legal restriction on people's freedom to use and redistribute the content and to produce modified versions of, and works derived from, it.[6] To achieve this, default copyright has to be overridden: the owner of the work has to license it to the public and permit everyone to copy, change and redistribute it.

This strategy was first used by the free software community; later, the emerging free culture movement took those ideals and extended them from software to culture as a whole (Chapter 1.1). The most commonly used software license is, as mentioned, the GNU GPL. For text, especially functional works such as textbooks, the GNU FDL (GFDL) was developed, and all of Wikipedia's text is licensed under it. Both are copyleft licenses, meaning they allow redistribution of derivative works on the condition that this happens under the same license, which prevents the content from becoming non-free. Commercial redistribution is also permitted.

To provide potential authors with a greater set of choices, the non-profit organization Creative Commons created six licenses of its own, meant for text as well as music, images and video. Creators can now choose a license that allows copying of their works for noncommercial use only, or one that prohibits changes. The original author, however, always has to be attributed when a work is copied. For artistic works, the Free Art License can also be used.

| Issued by | License | Attribution required? | Commercial use allowed? | Derivative works allowed? | Copyleft (Share-Alike) |
|---|---|---|---|---|---|
| GNU | GPL (software) | no | yes | yes | yes |
| GNU | GFDL (text) | yes | yes | yes | yes |
| Creative Commons | Attribution (by) | yes | yes | yes | no |
| Creative Commons | Attribution-NonCommercial (by-nc) | yes | no | yes | no |
| Creative Commons | Attribution-NoDerivs (by-nd) | yes | yes | no | no |
| Creative Commons | Attribution-ShareAlike (by-sa) | yes | yes | yes | yes |
| Creative Commons | Attribution-NonCommercial-NoDerivs (by-nc-nd) | yes | no | no | no |
| Creative Commons | Attribution-NonCommercial-ShareAlike (by-nc-sa) | yes | no | yes | yes |
| Copyleft Attitude | Free Art License | yes | yes | yes | yes |

This flood of different licenses has, however, led to some confusion and even incompatibility. For example, a work licensed under the GNU FDL cannot be combined with a work under the Creative Commons Attribution-ShareAlike license, as both licenses require any modified version of a work to be released under exactly the same license again.

It is generally agreed that even the most restrictive of the Creative Commons licenses, the Attribution-NonCommercial-NoDerivs license, is better than plain default copyright. However, only two of the Creative Commons licenses qualify as free content licenses: the Attribution and the Attribution-ShareAlike license.[7] The others impose restrictions on the reuse of the work that are too severe. On the other hand, works of personal opinion probably shouldn't be altered. In such cases, a lengthy license isn't even necessary and it might be sufficient to add a phrase like this: "Verbatim copying and distribution of this entire article are permitted worldwide without royalty in any medium provided this notice is preserved."
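The properties in the license table above can be encoded as data to make the two rules from the text explicit. This is a minimal sketch, not legal advice. It assumes that a license counts as a free content license when it allows both commercial use and derivative works, which matches the rows of the table and the statement that only two Creative Commons licenses qualify, and that two different copyleft licenses cannot be combined, as in the GFDL and Attribution-ShareAlike example. The names `License`, `LICENSES`, `is_free_content` and `copyleft_conflict` are hypothetical.

```python
from typing import NamedTuple


class License(NamedTuple):
    name: str
    attribution: bool   # attribution required?
    commercial: bool    # commercial use allowed?
    derivatives: bool   # derivative works allowed?
    copyleft: bool      # share-alike condition?


# Values copied from the license table in this section.
LICENSES = {
    "gpl": License("GNU GPL", False, True, True, True),
    "gfdl": License("GNU FDL", True, True, True, True),
    "by": License("CC Attribution", True, True, True, False),
    "by-nc": License("CC Attribution-NonCommercial", True, False, True, False),
    "by-nd": License("CC Attribution-NoDerivs", True, True, False, False),
    "by-sa": License("CC Attribution-ShareAlike", True, True, True, True),
    "by-nc-nd": License("CC Attribution-NonCommercial-NoDerivs", True, False, False, False),
    "by-nc-sa": License("CC Attribution-NonCommercial-ShareAlike", True, False, True, True),
    "fal": License("Free Art License", True, True, True, True),
}


def is_free_content(lic: License) -> bool:
    """Assumed rule: free content permits commercial use and derivatives."""
    return lic.commercial and lic.derivatives


def copyleft_conflict(a: License, b: License) -> bool:
    """Two different copyleft licenses each demand their own terms for the result."""
    return a.copyleft and b.copyleft and a.name != b.name


free_cc = [key for key, lic in LICENSES.items()
           if key.startswith("by") and is_free_content(lic)]
print(free_cc)
print(copyleft_conflict(LICENSES["gfdl"], LICENSES["by-sa"]))
```

The conflict check deliberately covers only the copyleft case named in the text; real compatibility questions depend on license versions and on details this sketch ignores.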

Sharing Hardware

Not only are information, knowledge and culture peer produced; material resources are shared as well. This is how peer-to-peer file-sharing networks work (Chapter 2.2): users share the storage space on their computers and their internet bandwidth with one another, thus distributing the hardware costs typically needed to spread such massive amounts of data. Likewise, the combined computational power of many home computers connected over the internet can outperform the most powerful server farms in existence. Every computer owner connected to the internet can download a free program that runs in the background and processes data whenever the computer is idle. These distributed computing projects range from analyzing radio telescope data in the Search for Extra-Terrestrial Intelligence (SETI@home) to running molecular dynamics simulations that may eventually be used in fighting diseases (Folding@home). Again, the power to perform such high-capacity calculations is achieved by voluntarily pooling resources. The participants receive no payment, but they are listed on the projects' websites in order of their contribution, and they take part for a goal greater than themselves.
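The pooling of idle computers described above can be illustrated with a toy simulation. It is a sketch under stated assumptions and does not reflect the actual software used by SETI@home or Folding@home: a large job is split into independent work units, hypothetical volunteers process as many units per round as their machines can spare, and the coordinator pools the results and keeps a contribution count. All names and numbers are invented for illustration.

```python
from collections import Counter


def make_work_units(start, stop, size):
    """Split the range [start, stop) into independent chunks."""
    return [range(lo, min(lo + size, stop)) for lo in range(start, stop, size)]


def process(unit):
    """Stand-in for real analysis: sum the squares in one work unit."""
    return sum(n * n for n in unit)


def run_project(units, volunteers):
    """Hand out units round by round and pool the results.

    `volunteers` maps a name to the number of units that machine
    can process per round while it is idle.
    """
    queue = list(units)
    total = 0
    credits = Counter()
    while queue:
        for name, per_round in volunteers.items():
            for _ in range(per_round):
                if not queue:
                    break
                total += process(queue.pop())
                credits[name] += 1
    return total, credits


units = make_work_units(1, 1001, 100)
total, credits = run_project(units, {"dana": 3, "eli": 1, "fay": 1})

# Contribution ranking, as a project website might list it.
for name, done in credits.most_common():
    print(name, done)

# The pooled result matches what a single machine would compute.
print(total == sum(n * n for n in range(1, 1001)))
```

The approach only works because the units are independent of each other, which echoes the earlier point that a project must be broken down into small parts before many participants can share it.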

This sharing of hardware only makes sense because technology developed in a way that put cheap, high-performance hardware in the hands of much of the population. If really fast network connections had been significantly cheaper than fast computational hardware, computers might have been centralized in order to be used economically. We would then have thin clients (a monitor, keyboard and mouse) connected to a remotely located server. In that case, there wouldn't be any excess computing capacity for distributed computing projects to use.

References

1. Wikipedia:Motivation crowding theory

2. Term coined by Yochai Benkler. Benkler, Yochai; The Wealth of Networks: How Social Production Transforms Markets and Freedom, 2006, p. 60; Wikipedia:Commons-based peer production

3. Studying Cooperation and Conflict between Authors with History Flow Visualizations

4. Wikipedia co-founder Jimmy Wales and the non-profit Wikimedia Foundation, which now operates Wikipedia and its sister projects, emphasize the importance of free knowledge and free software.

5. More information about the Google search algorithm: Wikipedia:PageRank

6. Wikipedia:Free content; Definition of Free Cultural Works

7. Definition of Free Cultural Works: Licenses